Maximize the Performance of Your Ceph Storage Solution

Philip Williams

The size of the “global datasphere” will grow to 163 zettabytes, or 163 trillion gigabytes, by 2025, according to IDC. That’s a lot of data, from gifs and cat pictures to business and consumer transactional data. It all represents a huge opportunity for businesses to create new products, improve their marketing, enhance their customer relationship management and even strengthen their own internal processes. To do so, however, organizations need the right tools and technologies for storing, accessing and analyzing that data.

For Rackspace Private Cloud-as-a-Service customers who use OpenStack clouds, we recommend Ceph, the leading open-source storage platform. Ceph provides highly scalable block and object storage in the same distributed cluster. Because it runs on commodity hardware, it eliminates the cost of expensive, proprietary storage hardware and licenses. Built with enterprise use in mind, Ceph can support workloads that scale to hundreds of petabytes, such as artificial intelligence, data lakes and large object repositories.

Ready for the enterprise

The latest upstream release of Ceph, Mimic, includes a new storage backend called BlueStore. While BlueStore has been available upstream for several releases, our experts hadn’t found it stable enough for production until now. It’s this informed and prescriptive view of emerging technology, based on extensive testing and expertise, that enables Rackspace to provide enterprise-grade Private Cloud-as-a-Service backed by industry-leading SLAs. Now that we’ve validated it, BlueStore can provide improved performance and additional features. Below, we discuss those features and share a set of best practices based on our experience operating Ceph at scale for many of the world’s largest companies.

Ceph BlueStore features

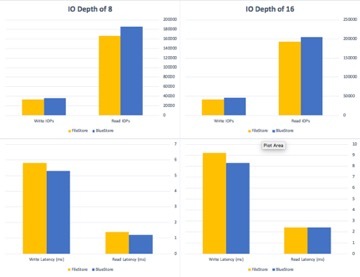

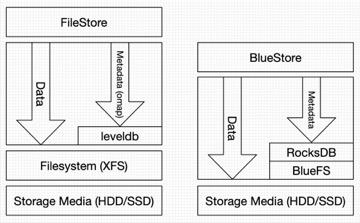

Prior to the release of BlueStore, Rackspace used FileStore, which relies on XFS and extended attributes to store the underlying objects internally in Ceph. While this approach served the community well, there was always a desire for more granular control over the underlying hardware and the placement of data on disk. By replacing FileStore with BlueStore, we have observed improved performance, in terms of both IOPS and latency, in certain scenarios.

BlueStore introduces a flexible block allocation scheme that allows for different behaviors depending on the underlying disk type. This will be especially important as we start to see wider adoption of the Non-Volatile Memory Express (NVMe) storage protocol and persistent memory. An additional benefit of BlueStore is increased durability, or long-term data protection. BlueStore also stores data and metadata in the cluster with checksums for increased integrity. Whenever data is read from persistent storage, its checksum is verified, be it during a rebuild or a client request. Within the Ceph community, all future upstream development work is now focused on BlueStore, and FileStore will eventually be retired.
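
To make the checksum-on-read idea concrete, here is a minimal sketch in Python. It is not BlueStore's implementation: the `StoredBlock`, `write_block` and `read_block` names are hypothetical, and the standard-library `zlib.crc32` stands in for whichever checksum algorithm a cluster is actually configured with.

```python
import zlib
from dataclasses import dataclass


@dataclass
class StoredBlock:
    """Hypothetical on-disk record: the payload plus the checksum written with it."""
    payload: bytes
    checksum: int


class ChecksumMismatch(Exception):
    """Raised when data read back does not match its stored checksum."""


def write_block(payload: bytes) -> StoredBlock:
    # Compute the checksum once, at write time, and keep it beside the data.
    # Assumption: CRC-32 from zlib is only a stand-in for the real algorithm.
    return StoredBlock(payload=payload, checksum=zlib.crc32(payload))


def read_block(block: StoredBlock) -> bytes:
    # Recompute on every read, whether the caller is a client or a rebuild.
    if zlib.crc32(block.payload) != block.checksum:
        raise ChecksumMismatch("stored checksum does not match data read back")
    return block.payload


block = write_block(b"customer transaction record")
print(read_block(block))

# Simulate silent corruption by flipping one bit of the stored payload.
corrupted = StoredBlock(
    payload=bytes([block.payload[0] ^ 0x01]) + block.payload[1:],
    checksum=block.checksum,
)
try:
    read_block(corrupted)
except ChecksumMismatch as exc:
    print("corruption detected:", exc)
```

The point of the sketch is the ordering: the checksum is verified before any data is handed back, so a damaged copy is caught at read time rather than passed on to the client.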

BlueStore best practices

Through our experience validating and operating BlueStore for tier-one service providers and large financial institutions, we’ve developed a set of useful best practices for anyone deploying Ceph with BlueStore.

- Base cache size on the RAM:OSD ratio. By default, BlueStore reserves 1 GB of memory for each disk-based object storage device (OSD) and 3 GB for each SSD-based OSD. Take care that the sum of the OSD memory reservations does not starve the operating system or the OSD processes. This impact is usually seen in very dense archive-style deployments, where each node has limited system resources but a significant amount of raw storage capacity.

- Reserve enough memory for the OS. When deploying and operating your Ceph cluster, make sure that the operating system has enough memory for efficient operation. Without sufficient memory, the OS will resort to swapping, which slows down your environment and reduces operational efficiency. This is a common problem that is easily ignored until it’s too late and your environment slows to a crawl.

- Place RocksDB+WAL on SSDs when the OSD is an HDD. As with FileStore, we highly recommend using higher-throughput flash-based devices for the RocksDB and WAL volumes with BlueStore. The only real difference between the two is that, with BlueStore, the more SSD space you can provision for each OSD, the greater the performance improvement.
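
The memory guidance above comes down to simple arithmetic, which we can sketch in Python. The per-OSD cache figures are the defaults quoted in the first bullet; everything else here (the `NodePlan` class, the OS reserve, the example node sizes and the even split of SSD space) is a hypothetical assumption for illustration, not a Ceph setting or a sizing recommendation.

```python
from dataclasses import dataclass

GIB = 1024 ** 3

# Defaults quoted in the text: 1 GB per HDD-backed OSD, 3 GB per SSD-backed OSD.
HDD_CACHE_BYTES = 1 * GIB
SSD_CACHE_BYTES = 3 * GIB


@dataclass
class NodePlan:
    """Hypothetical description of one storage node."""
    ram_bytes: int
    hdd_osds: int
    ssd_osds: int
    os_reserve_bytes: int  # assumption: chosen by the operator, not a Ceph default

    def cache_total(self) -> int:
        # Sum of the per-OSD BlueStore cache reservations on this node.
        return self.hdd_osds * HDD_CACHE_BYTES + self.ssd_osds * SSD_CACHE_BYTES

    def headroom(self) -> int:
        # RAM left after the OS reserve and the caches; negative means overcommitted.
        return self.ram_bytes - self.os_reserve_bytes - self.cache_total()


def db_bytes_per_osd(ssd_bytes: int, hdd_osds: int) -> int:
    # Assumption: SSD space for RocksDB+WAL is split evenly across HDD-backed OSDs.
    if hdd_osds <= 0:
        raise ValueError("need at least one HDD-backed OSD")
    return ssd_bytes // hdd_osds


# A dense archive-style node (made-up numbers): lots of disks, modest RAM.
dense = NodePlan(ram_bytes=64 * GIB, hdd_osds=60, ssd_osds=0, os_reserve_bytes=8 * GIB)
print("cache total (GiB):", dense.cache_total() // GIB)
print("headroom (GiB):", dense.headroom() // GIB)
```

A negative headroom, as in this made-up dense node, is the starvation scenario described above: the cache reservations and the OS reserve together exceed physical RAM, so either the cache size or the OSD count per node has to come down.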

Those are just a few of the best practices we’ve learned through more than 3.5 million server hours of managed operations for the world’s largest companies. At Rackspace, we are committed to providing the world’s best customer experience on the world’s leading cloud technologies, including Private Cloud-as-a-Service, which offers enterprise customers strategic flexibility and compelling economics delivered as a fully managed cloud service. We meet customers where they are on their cloud journey, offering the right solutions for their specific needs. If you’d like to learn more about how Rackspace can help your organization use this newly supported service, as well as how it can affect your cloud transformation, take advantage of a free strategy session with a private cloud expert, no strings attached.
